Multisensory coding of audiovisual movies in the human hippocampus

Raccah, O.; Agarwal, A.; Zhu, Y.; Turk-Browne, N. B.

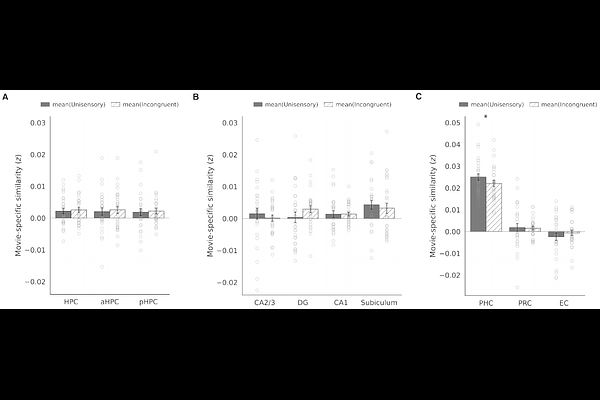

Abstract

The hippocampus receives convergent input from multiple sensory systems, yet in humans it has been studied almost exclusively through vision. Here we examine how the hippocampus contributes to sensory processing beyond the visual modality and to the multisensory integration of visual information with these other modalities. Participants were exposed to multiple repetitions of short naturalistic movie clips, each presented in four formats: auditory only, visual only, congruent audiovisual, and incongruent audiovisual (audio and video from different movies). Using high-resolution fMRI, we measured univariate activation and multivariate representations across the subfields and longitudinal axis of the human hippocampus. Whereas univariate analyses detected only visual responses across hippocampal subfields, with no activation for auditory stimuli and no benefit of congruent stimuli, multivariate analyses revealed robust representations of both auditory and visual scenes. The posterior hippocampus showed enhanced pattern similarity for congruent stimuli relative to unisensory stimuli, demonstrating multisensory facilitation. The anterior hippocampus showed crossmodal decoding between auditory and visual versions of the same clip, suggesting a more abstract representation. Finally, whole-brain searchlight analyses revealed parallel effects in cortical regions known to support multisensory integration. These findings advance understanding of auditory and multisensory coding in the human hippocampus, including the discovery of a functional dissociation along its longitudinal axis, from facilitation in posterior to generalization in anterior.
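As a rough illustration of the kind of multivariate measure referred to above, the following sketch contrasts same-clip against different-clip pattern correlations between two conditions (for example, auditory and visual versions of each clip). It is a minimal sketch and not the authors' pipeline: the abstract does not specify the similarity metric, preprocessing, or statistics, so the use of Pearson correlation, the function names `pearson` and `similarity_contrast`, and the toy voxel values are all assumptions made for illustration.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation between two equal-length voxel patterns."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def similarity_contrast(patterns_a, patterns_b):
    """Mean same-clip correlation minus mean different-clip correlation.

    patterns_a and patterns_b map clip labels to voxel patterns from two
    conditions (hypothetical structure). A positive value means patterns
    for the same clip are more alike across conditions than patterns for
    different clips.
    """
    same, diff = [], []
    for clip_a, pa in patterns_a.items():
        for clip_b, pb in patterns_b.items():
            (same if clip_a == clip_b else diff).append(pearson(pa, pb))
    return sum(same) / len(same) - sum(diff) / len(diff)

# Toy data (invented): four voxels, two clips, two modalities.
auditory = {"clip1": [1.0, 2.0, 3.0, 4.0], "clip2": [4.0, 3.0, 2.0, 1.0]}
visual = {"clip1": [2.0, 4.0, 6.0, 8.0], "clip2": [8.0, 6.0, 4.0, 2.0]}

contrast = similarity_contrast(auditory, visual)
print(contrast)
```

With these toy patterns the same-clip correlations are perfectly positive and the different-clip correlations perfectly negative, so the contrast is at its maximum. In real data the contrast would typically be computed per participant and region of interest and then tested against zero across participants.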